Thanks to the Visual SLAM embedded in Alphasense Position, your mobile machines will never get lost again!

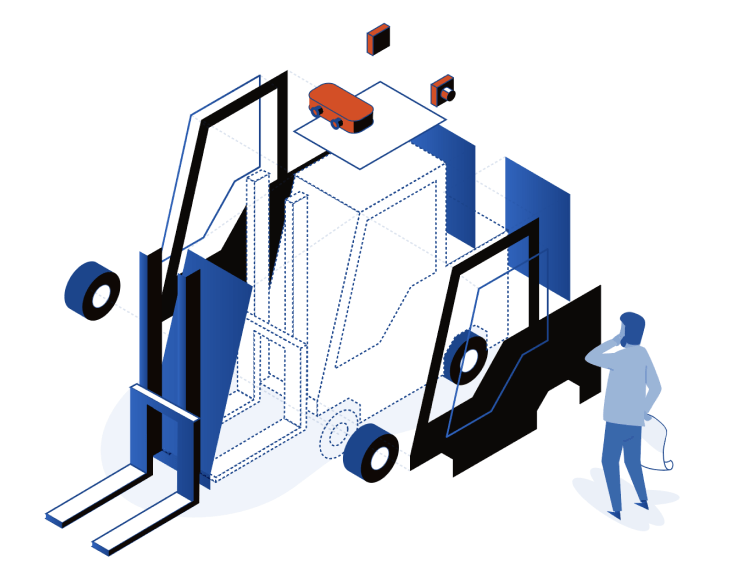

Alphasense Position is a multi-camera, industrial-grade, Visual-SLAM-in-a-box solution for mobile robots. The system is an Edge AI solution that provides full 3D positioning for any kind of ground vehicle in any environment, even in fast-changing and dynamic spaces.

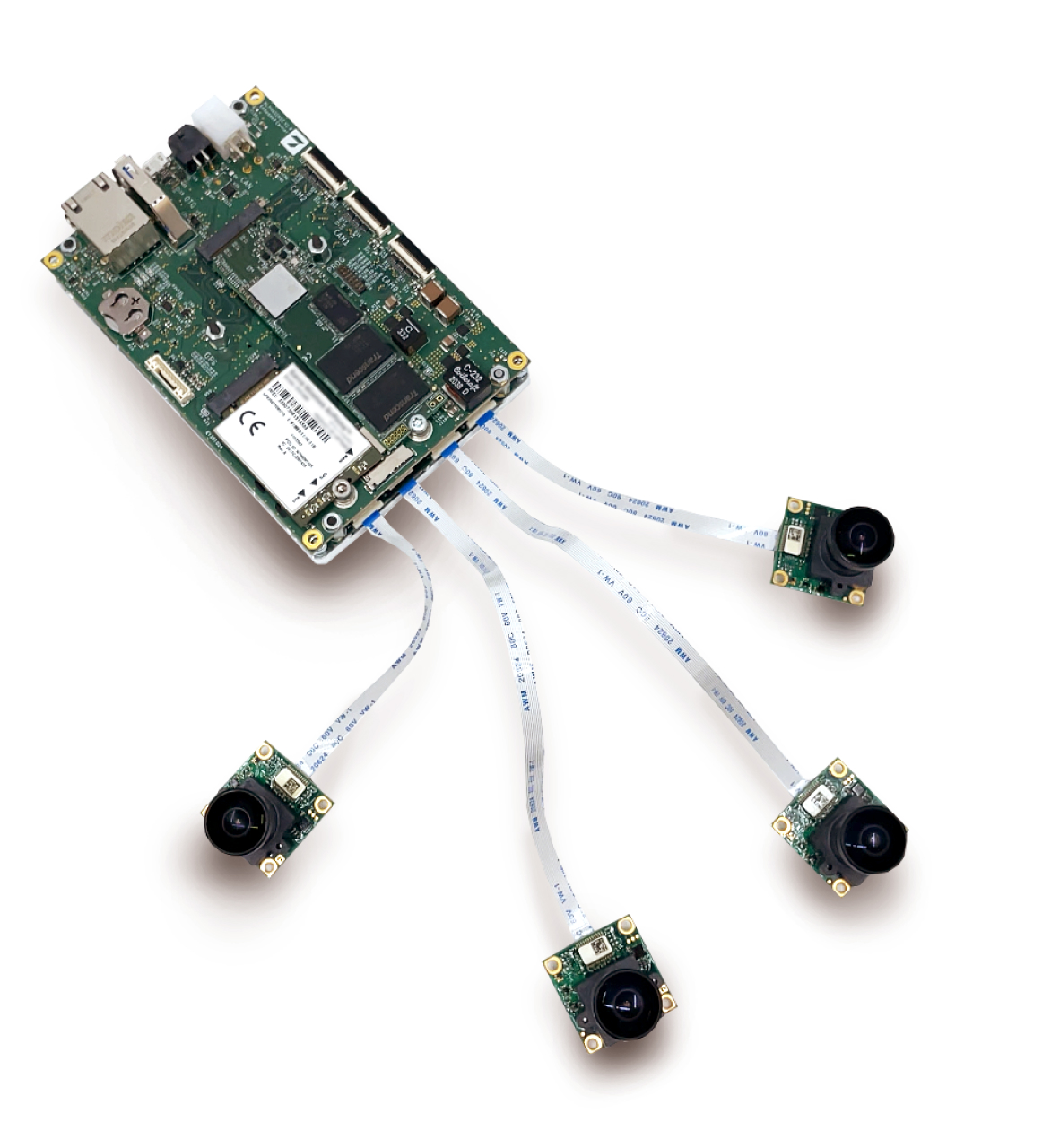

Setup with 5 calibrated cameras (casing is optional) for a fast and easy integration process.

Independent camera placement (casing is optional) allows for an optimal configuration.

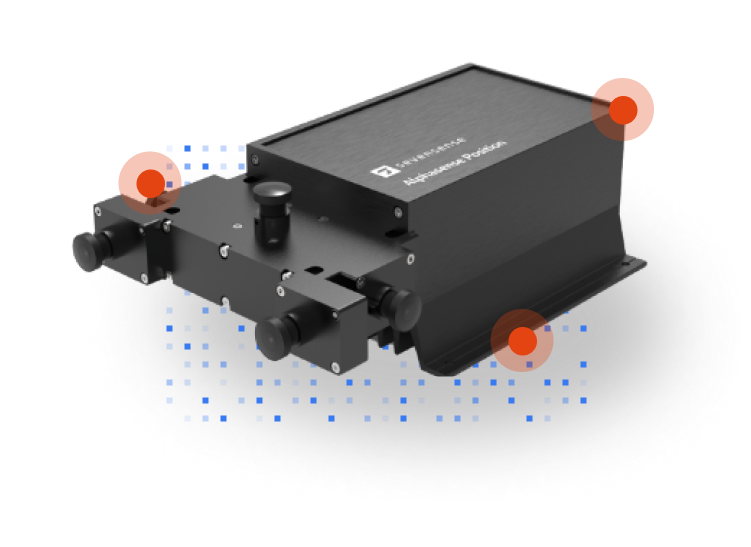

Quickly evaluate Alphasense Position and experience first-hand the unique benefits of our AI-driven Visual SLAM technology.

The Alphasense Position Evaluation Kit uses a multi-camera setup that is fixed on a rigid frame and factory-calibrated. This makes installation fast and easy, even if you are unfamiliar with Visual SLAM.

Install and test Alphasense Position in less than 1 hour!

Initial setup and usage are effortless thanks to Alphasense Console, a user-friendly web interface that you can access directly from your desktop, laptop, or mobile phone.